Decolonising Digital Rights: Why It Matters and Where Do We Start?

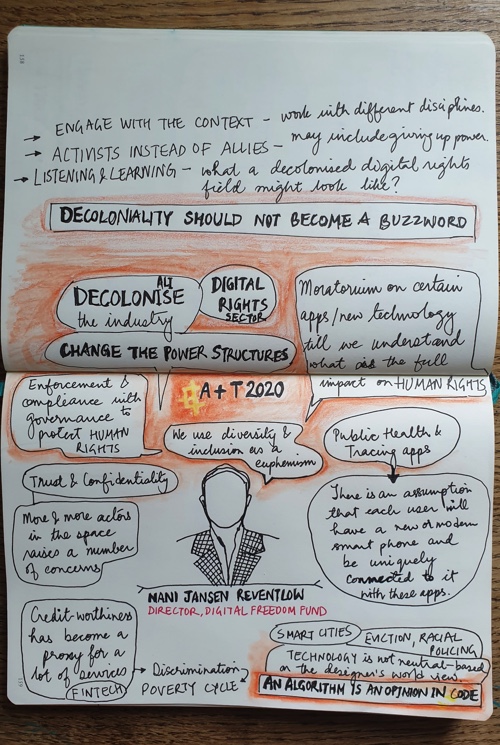

This speech was given by DFF director, Nani Jansen Reventlow, on 9 October as the keynote for the 2020 Anthropology + Technology Conference.

The power structures underlying centuries of exploitation by one group of another are still here.

Besides the fact that we, in reality, still have over 60 colonised territories around the world today, maintained by 8 countries (though the UN General Assembly would disagree with that number), colonisation has taken on many different forms, including in and through technology.

What does this mean for our societies? What would things look like if they were different? How do we get there –– or: how do you decolonise society? How do you decolonise technology? And how do you decolonise digital rights?

I will start this talk with a spoiler: I will not be able to provide you with an answer to these fundamental questions. What I will try to do in the next half hour is tell you something about the problems we at the Digital Freedom Fund are seeing in Europe when it comes to digital rights and what is often euphemistically referred to as “diversity and inclusion”.

I will also tell you about what we are doing to try to set in motion a process to fundamentally change the power structures in the field that works on protecting our human rights in the digital context.

But before we get there, we first need to take a look at what the problem is and why we have it.

What’s the problem?

Today’s conference is centred around “championing socially responsible AI,” but: what does this mean? From a human rights perspective, many AI-related digital rights conversations tend to focus on the right to privacy and data protection. In doing so, these conversations often miss the full extent of the social impact new technologies can have on human rights. This is one of the reasons why it is so important that we decolonise the digital rights space and encourage an intersectional approach to AI and human rights issues.

Let me illustrate some of the issues in the context of the themes of this conference, starting with:

Health tech

The implications of health tech for individuals are a prominent topic of conversation at the moment, as we are facing the COVID-19 pandemic.

Big Data solutionism is pervading coronavirus responses across the globe, with contact tracing, symptom checking, and immunity passport apps being rolled out at rapid speed.

These technologies are often not properly tried and tested, and it is clear that privacy and data protection often have not been front and centre for those developing the technology, let alone other human rights considerations. They also illustrate the degree to which our technology and the way we deploy it are colonised.

The UK, for example, launched a “Test and Trace” system in England and Wales in May this year, without, it later admitted, having properly conducted a data protection impact assessment. This admission came after the NGO Open Rights Group had threatened legal action. The heavily criticised app was abandoned and, since late September, a new one has been available, which addresses some of the privacy concerns previously raised.

But: is privacy the only issue we should be looking at when considering the viability of using tracing apps to combat a public health emergency? Limiting our analysis to privacy and data protection alone results in blind spots on many of the broader issues at play, such as discrimination and access to healthcare. A few examples.

To be able to download and use the app, you need to have a relatively new phone, with the right operating system installed. This means that those who are unable to afford or don’t have direct access to technology are excluded.

There is also an assumption that each user would be uniquely linked to a phone. And of course, in order to download the app and receive warnings and notifications, you will have to be online.

To put it simply: the effectiveness of the app is based on an assumption that the “average person” in society is the exclusive owner of a new smartphone with reliable access to the internet. Researchers at Oxford University have estimated that more than half the population of a country would need to make use of a tracing app in order for it to be effective.

This raises the question: what happens to the “less than half” of the rest of the population that does not or cannot make use of the app, and how does the automation of disease control affect their vulnerability? Should we want to use an app at all if it is not effective for the protection of everyone in our society?

Concerns around health data, however, are not new to the COVID-19 context. The UN Special Rapporteur on privacy has recognised that medical data is of “high value” for purposes such as social security, labour, and business. This means that stakeholders such as insurance companies and employers have a considerable interest in health-related data.

Many health services are built on the values of trust and confidentiality. But as more and more actors move into the health data space, these values are fading more and more out of focus.

A failure to protect health data can engage the rights to life, social protection, healthcare, work, and non-discrimination. Very concretely, it may deter individuals from seeking diagnosis or treatment, which in turn undermines efforts to prevent the spread of, say, a pandemic.

The access Home Office immigration officials are given to check entitlement to health services as part of the UK government’s “hostile environment” policy is a clear example of this, but even in “lower risk” settings, a person might think twice about getting medical help if the potential repercussions of sensitive health information ending up in unwanted hands are sufficiently grave.

FinTech

It is said that health cannot be bought, but with that wisdom in mind let us turn to the second conference theme, which is FinTech.

Here too, with the ever-increasing automation of financial services, it is not only the right to privacy that is under threat.

Access to financial services, such as banking and lending, can be a decisive factor in an individual’s ability to pursue their economic and social well-being. Access to credit helps marginalised communities exercise their economic, social, and cultural rights.

Muhammad Yunus, social entrepreneur and Nobel Peace Prize winner, has gone so far as saying that access to credit should be a human right in and of itself. “A homeless person should have the same right as a rich person to go to a bank and ask for a loan depending on what case he presents.”

In reality, automation in the financial sector often polices, discriminates, and excludes, thereby threatening the rights to non-discrimination, association, assembly, and expression; individuals may not want to associate with certain groups or express themselves in certain ways for fear of how it will impact their creditworthiness.

This policing, discrimination, and exclusion can also have an impact on the right to work, to an adequate standard of living, and the right to education.

Cathy O’Neil has noted that creditworthiness has become an “all-too-easy” stand-in for other virtues. It is not just used as a proxy for responsibility and “smart decisions”, it is also a proxy for wealth. Wealth, in turn, is highly correlated with race.

While the FinTech narrative is that it works with “unbiased” scoring algorithms that are blind to characteristics such as gender, class, and ethnicity, research shows a different picture.

Many of these “modern” algorithms make their decisions based on historic data and decision patterns, which has led to the coining of the term “weblining,” to show how existing discriminatory practices that were operationalised in the US in the 1930s with the practice of “redlining” to keep African American families from moving into white neighbourhoods, are now replicated in new technology.

Those who can afford to, can hire consultants or go live in certain neighbourhoods to boost their credit scores. In the meantime, those living in poverty are refused loans, often on a discriminatory basis, and even targeted because of their credit scores for pay-day-loans and other online advertisements that can plunge them further into poverty.

This illustrates that this is more than a question of privacy: it is a question of livelihood.

It doesn’t stop there. Credit scores are even used to make decisions about a person outside of the financial services sector, such as whether to hire or promote them at work. Conversely, other proxies are increasingly being used as stand-ins for creditworthiness. O’Neil has explained how this has contributed to a “dangerous poverty cycle”.

With datasets recording nearly every aspect of our lives, and these data points being relied on to make decisions on us as employees, consumers, and clients, we are being labelled as targets or dispensables. Our occupations, preoccupations, salaries, property values, and purchase histories result in us being labelled as “lazy,” “worthless,” “unreliable”, or a risk, and that label can carry across many different aspects of our lives.

Smart cities

I will be brief about smart cities because I actually don’t think the question of making cities “smarter” through technology is worth having unless we are talking about the need to completely reinvent the urban design that has historically and systemically harmed people of colour, people living in poverty, people with disabilities, and marginalised groups.

Smart city design runs the risk of automating and embedding assumptions on how we run and manage cities that are less about people, and more about profit and exclusion, as well as vague, unsubstantiated notions of “efficiency”.

Smart city initiatives in India have led to mass forced evictions, and in the United States the question should be asked if smart city design to “reduce neighbourhood crime” isn’t a euphemism for increased surveillance, with all the racialised policing that comes with it.

The really interesting question here is how we can use AI to help fix these inequalities and harms, but that is not usually the focus of many of the current smart city debates.

Why do we have this problem?

We just looked at some of the manifestations of the problem of colonised technology. So: what causes us to have this problem in the first place?

First, there is a common and incorrect assumption that technology is neutral. However, the apps, algorithms, and services we design ingrain choices made by their creators. They replicate their creators’ preferences and their perceptions of what the “average user” is like and what this average user would want, or should want, to do with the technology. The design choices are based on the designers’ world view and therefore also mirror it.

As someone said to me the other day: “an algorithm is just an opinion in code.” When those designers are predominantly male, privileged, able-bodied, cis-gender, and white, and their views and opinions are being encoded, this poses serious problems for the rest of us.

This relates to the second cause, which is that Silicon Valley has a notorious “brogrammer” problem. When you look at any graph reflecting the makeup of Silicon Valley, where most of our technology here in Europe comes from (and this is a problem in and of itself, as technology developed from a white, Western perspective is deployed around the world), this is easily visible.

For professions such as analysts, designers, and engineers, the numbers for Asian, Latina, and Black women decrease as role seniority increases, often to the point that they literally become invisible on the graph because their numbers are so small.

Analysis of 177 Silicon Valley companies by investigative journalism website Reveal showed that ten large technology companies in Silicon Valley did not employ a single black woman in 2018, three had no black employees at all, and six did not have a single female executive.

This should make it less surprising that, for example, facial recognition software built by these companies is predominantly good at recognising white, male faces.

However, a predominantly male, white, and able-bodied workforce is not the only thing that factors into technological discrimination.

A third cause, which we already touched upon in the context of FinTech, is that technology is built and trained on data that can already reflect systemic bias or discrimination. If you then use those data to develop and train new software, it is not surprising that this software will be geared towards replicating those historical data. Technology based on data from a racist, sexist, classist, and ableist system will provide outcomes that reflect that racism, sexism, classism, and ableism. Unless a conscious effort is made to get the system to make different choices, systems built on such data will replicate the historical preferences they have been fed.

Finally, we don’t work consistently with interdisciplinary design teams. Engineers will build technology to certain specifications, and those will have systemic biases baked into them; you can have all the non-male, non-white, non-ableist engineers you can think of, but it will not be up to them (alone) to solve the systemic problems.

If society is sexist, racist, and ableist, so will the AI it develops be. It is unhelpful to focus on the technology alone as it negates the political and societal systems in which it is developed and operates.

An interdisciplinary approach in developing AI systems is therefore crucial. There is a role for social scientists and others at crucial stages of the design and decision-making process; developing suitable AI is not just a task for engineers and programmers.

What can we do about it?

We obviously have a large-scale problem on our hands and the monster we created won’t easily be put back in its cage. There are, however, a number of things we can do, both in the shorter and longer term.

- Push for moratoriums on new technologies until we understand their social impact, particularly on human rights. The call for a ban on the use of facial recognition technology has gained more traction following the Black Lives Matter protests, with several tech giants putting a hold on –– part of –– their products in this area. This should be a guiding principle: unless we know and understand in full what the human rights impact of new technology is, it should not be developed or used.

- We also need to have the debate on where to draw the so-called “red lines” on where AI technology should not be used at all. This conversation needs to be people-centred; the individuals and communities whose rights are most likely to be violated by AI are those whose perspectives are most needed to make sure the red lines around AI are drawn in the right places.

- When we analyse the impact of new technologies, this needs to be done from an intersectional perspective, not only on how it affects privacy and data protection. As we saw earlier, breaches of privacy are often at the root of other human rights violations. Companies and governments need to be held accountable for these violations and they need to work with multidisciplinary teams that include social scientists, academics, activists, campaigners, technologists, and others to prevent violations from occurring in the first place. This work also needs to be done closely and consistently with affected groups and individuals to understand the full extent of the impact of AI-driven technologies.

- We need to push for enforcement and compliance with existing legislation protecting human rights. There often is a call for new regulation, but this seems to conveniently forget that we actually have existing international and national frameworks that set clear standards on how our human rights should be respected, protected, and fulfilled. This is also a healthy antidote to the fuzzy “ethics” debate companies would like us to have instead of focusing on how their practices can be made to adhere to human rights standards.

- Finally, we need to not only decolonise the tech industry: we also need to decolonise the digital rights field. The individuals and institutions working to protect our human rights in the digital context clearly do not reflect the composition of our societies. This leaves us with a watchdog that has too many blind spots to properly serve its function for all the communities it is supposed to look out for.

At the outset, I referred to “diversity and inclusion” as a euphemism. I did so because diversity and inclusion alone are not enough to bring about the change that needs to happen.

Instead of focusing on token representation, which essentially treats the current status of the field as a pipeline problem, we need to change the field on a structural level; we need to change its systems and its power structures.

This is something that is fundamentally different from “including” those with disabilities, from racialised groups, the LGBTQI+ community, and other marginalised groups in the existing, flawed ecosystem.

Here we return to the big question raised at the beginning of this talk: how do you decolonise a field? The task of re-imagining and rebuilding the digital rights field is clearly enormous. Especially since digital rights cover the scope of all human rights and therefore permeate all aspects of society, the field does not exist in isolation.

We therefore cannot solve any of these issues in isolation either. There are many moving parts, many of which will be beyond our reach to tackle, even if we at the Digital Freedom Fund are working with a wonderful partner in this, European Digital Rights (also known as EDRi).

But: we need to start somewhere, and we need to get the process started with urgency as technological developments will continue at a rapid pace and we need a proper watchdog to fight for our rights in the process.

We started earlier this year with a process of listening and learning. Over the past months, we have had conversations with over 30 individuals and organisations that we are currently not seeing in the room for conversations about digital rights in Europe.

We asked about their experience working on digital rights, what their experience has been working with digital rights organisations, and what a decolonised digital rights field might look like and what it might achieve.

We also started collecting and reading literature about decolonising technology and other fields, to start developing a possible joint vision we can work towards.

As these conversations continue, we are starting similar conversations with the digital rights field to learn what their experience has been working on racial and social justice issues, working with partners in this field, and also what their vision of a decolonised digital rights field might look like.

The next step is an online meeting in December of this year to (a) connect different stakeholders and (b) receive input on what the next step in the process, which we are referring to as a design phase, should look like. This design phase, which we hope to start next year, should be a multi-stakeholder effort to come to a proposal for a multi-year, robust programme to initiate a structural decolonising process for the field.

Much has changed since we first started talking about the need to decolonise the digital rights sector two years ago.

The recent international Black Lives Matter protests have done a lot to boost awareness about systemic racism. On the one hand, this is encouraging; on the other, the threat of “decoloniality” becoming yet another buzzword that people like to use but not practise looms large.

The irony of our work now being of interest to many –– the media, policymakers, funders… –– now that it has been validated because the “white gaze” became captivated by racial justice protests amidst the boredom of a global lockdown, is also not lost on me.

That being said, the current mood does illustrate how necessary this work is, not only in the digital rights space, but everywhere in our society. And the more of these processes we can set in motion, the better a world we will be creating for all of us.